Introduction

Analysis of monitoring data from 200 residential solar-storage installations over 12 months reveals a pattern most installers miss. Communication uptime varies significantly across installations. Some systems maintain 99.9% uptime with only brief transient losses lasting seconds. Others operate at 92% uptime, meaning communication fails roughly 2 hours per day on average. A third group sits at 85% uptime, or about 3.6 hours of lost communication daily.

Systems with 99.9% uptime show capacity fade matching normal LFP aging expectations: 1% to 2% loss over 12 months. Systems with 92% uptime show 5% to 10% premature capacity fade beyond normal aging. Systems with 85% uptime show 15% to 20% capacity loss in just 6 months. The difference isn’t random variation in battery quality. These are identical battery models from the same manufacturer installed within weeks of each other. The variable is communication reliability and how each system responds to loss.

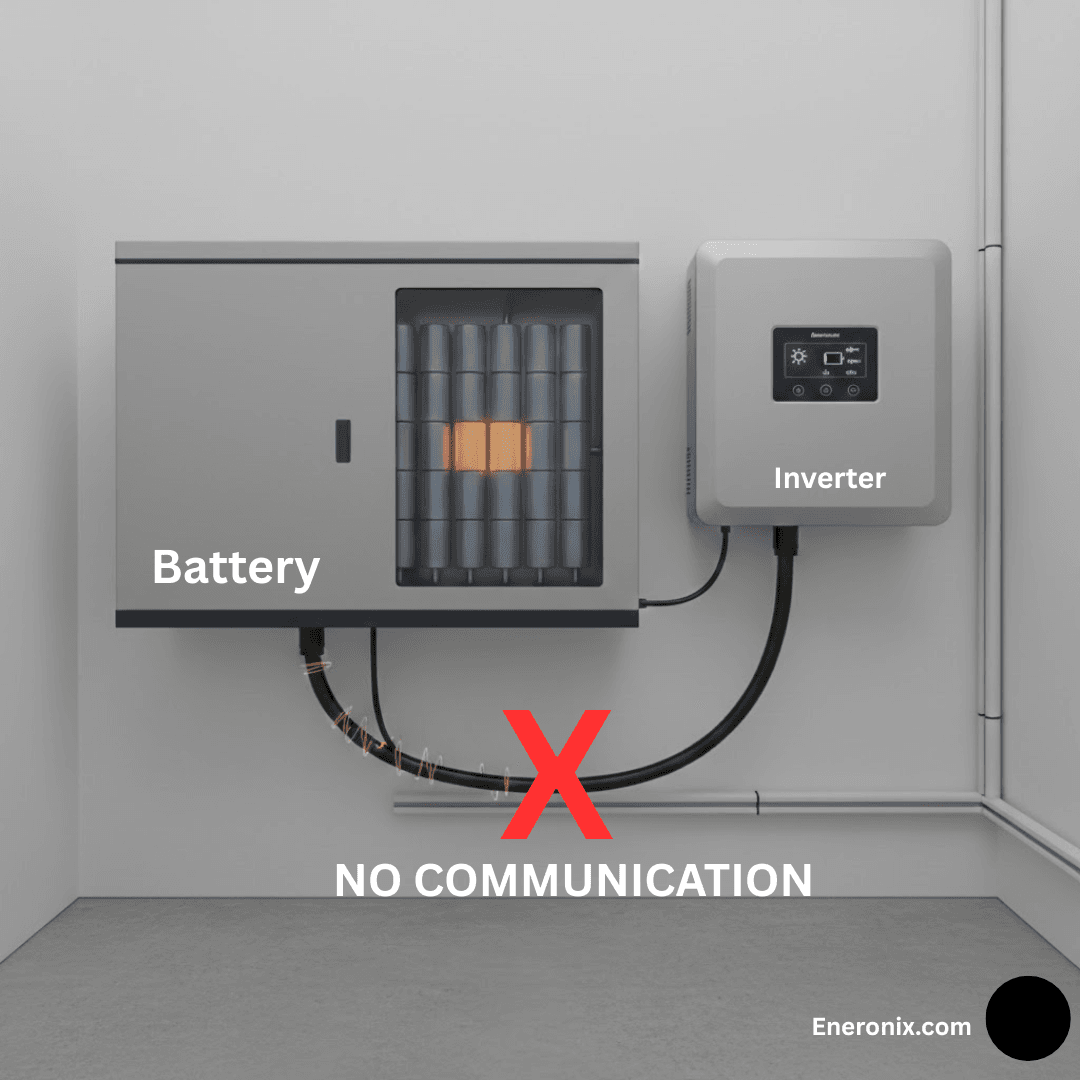

Communication loss triggers one of three response modes. Mode one: the BMS opens contactors immediately to protect cells. Mode two: both devices continue operating with last known values. Mode three: the inverter switches to hardcoded default settings after a timeout. Each mode creates a different damage pattern: contactor cycling wear, stress from operating with stale limits, or chronic mischarge from wrong defaults. The consequences range from obvious and immediate (system offline, contactors damaged) to invisible and slow (premature capacity fade that looks like normal aging).

The industry treats communication as binary: working or broken. This misses the reality that most systems exist in a middle state of marginal reliability. They work well enough that nobody investigates, but poorly enough that batteries degrade faster than they should. Understanding which failure mode your system uses and what damage pattern to expect enables both diagnosis after problems appear and prevention through proper configuration before they occur. This post explains the three modes, their mechanisms, damage timelines, and how to identify which one is killing your battery.

What Triggers Communication Loss

Physical layer failures cause the majority of communication loss events. Screw terminals loosen over time from thermal cycling as batteries heat during charge and discharge, then cool at rest. Expansion and contraction work terminals loose over months until resistance increases enough that signal integrity degrades. Connectors in mobile or vehicle installations experience vibration that causes intermittent contact. Physical cable damage from being crushed during installation, cut by sharp edges, or chewed by rodents in outdoor enclosures causes complete loss. These failures are usually obvious once discovered but can go unnoticed for weeks if nobody checks communication status regularly.

Termination resistor failure creates intermittent errors rather than complete loss. A 120 ohm resistor can fail open from thermal stress or poor solder joints. The connection to the resistor corrodes over time in humid environments. Without proper termination, signal reflections corrupt messages. Short cables under 2 meters sometimes work anyway. Longer cables fail intermittently with error rates increasing under high EMI conditions. The symptom pattern is communication working fine at low power but failing during high-rate charge or discharge when inverter switching noise peaks.

EMI-induced corruption happens when inverter switching at 20 to 100 kilohertz couples into the CAN cable. High DC currents create magnetic fields that induce voltages in nearby wiring. Messages get corrupted and fail CRC checksums even though the physical connection remains intact. The receiver discards corrupted messages as if they never arrived. Communication loss correlates directly with power level: works at 20 amps, marginal at 50 amps, fails consistently above 80 amps.
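One way to confirm that losses track power level is to bin logged frame counts by pack current and compare the loss rate per bin. The following is a minimal sketch; the sample tuple layout and the numbers are made up for illustration, not taken from the monitored installations.

```python
from collections import defaultdict


def error_rate_by_current(samples, bin_width_a=20):
    """Return {bin_start_amps: fraction of frames lost} for each current bin.

    samples: iterable of (pack_current_a, frames_expected, frames_received).
    The tuple layout is hypothetical; adapt it to whatever your logger records.
    """
    expected = defaultdict(int)
    received = defaultdict(int)
    for current_a, n_expected, n_received in samples:
        # Charge and discharge currents are binned together by magnitude.
        bin_start = int(abs(current_a) // bin_width_a) * bin_width_a
        expected[bin_start] += n_expected
        received[bin_start] += n_received
    return {
        b: 1.0 - received[b] / expected[b]
        for b in sorted(expected)
        if expected[b] > 0
    }


# Illustrative one-minute samples at a 1 Hz message rate (made-up numbers).
samples = [(18, 60, 60), (22, 60, 60), (50, 60, 54), (85, 60, 12), (92, 60, 6)]
rates = error_rate_by_current(samples)
for bin_start, rate in rates.items():
    print(f"{bin_start:>3} A and up: {rate:.0%} of frames lost")
```

A loss rate that climbs steadily with the current bin points toward EMI coupling rather than a loose terminal, which would tend to fail regardless of load.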

Firmware timing issues create asymmetric failures where one device thinks communication is fine while the other declares it lost. With the BMS watchdog timer set to 10 seconds and the inverter watchdog set to 5 seconds, the inverter times out first and takes action while the BMS remains unaware. Message timing drift with temperature causes problems when the BMS uses a temperature-sensitive oscillator. Messages transmit at exactly 1000 millisecond intervals at 25 degrees Celsius but drift to 1080 milliseconds at 45 degrees. If the inverter timeout threshold is 1100 milliseconds, the margin shrinks from 100 milliseconds to 20, so the system works at room temperature but fails when hot, as soon as ordinary jitter or further heating pushes the interval past the threshold.
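The drift figures above can be turned into a quick margin check. This sketch assumes the drift is linear with temperature, which real oscillators may not follow; the function names are illustrative.

```python
def timeout_margin_ms(message_interval_ms, timeout_ms):
    """Headroom between the actual message interval and the receiver timeout."""
    return timeout_ms - message_interval_ms


def temp_at_timeout_c(t1_c, interval1_ms, t2_c, interval2_ms, timeout_ms):
    """Temperature where the interval reaches the timeout, ASSUMING linear drift."""
    drift_ms_per_c = (interval2_ms - interval1_ms) / (t2_c - t1_c)
    return t1_c + (timeout_ms - interval1_ms) / drift_ms_per_c


margin_cool = timeout_margin_ms(1000, 1100)  # at 25 degrees Celsius
margin_hot = timeout_margin_ms(1080, 1100)   # at 45 degrees Celsius
crossover_c = temp_at_timeout_c(25, 1000, 45, 1080, 1100)

print(f"margin at 25 C: {margin_cool} ms, at 45 C: {margin_hot} ms")
print(f"interval reaches the timeout near {crossover_c:.0f} C (linear assumption)")
```

Any transmit jitter eats into the remaining margin, so the practical failure temperature sits below the linear crossover.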

Mode 1: BMS Opens Contactors

The BMS logic is straightforward: cannot communicate means cannot control. If the BMS cannot send updated CVL, CCL, and DCL values, it cannot ensure the inverter respects current cell conditions. A cell might be approaching over-voltage while the BMS screams to stop charging but the message never arrives. Unable to protect through communication, the BMS defaults to the only protection mechanism that works without communication: physically disconnecting the battery. The watchdog timer typically expires 10 seconds after the last valid message was received, and contactors open within 1 to 5 seconds after that.

What happens during disconnect depends entirely on current flow at that moment. If communication fails during idle with zero current, contactors open cleanly with no arcing. If communication fails during 100 amp charge or discharge, the contactors must interrupt that current. An arc forms between the contacts as they separate. Current continues flowing through ionized air until the gap widens enough to extinguish the arc, typically 5 to 20 milliseconds depending on current magnitude and contact separation speed. Each arc erodes microscopic amounts of contact material through vaporization and transfer between contacts.

Damage accumulates with each cycle. After 10 to 20 disconnect events under load, changes are barely measurable. After 20 to 30 cycles, visual inspection shows pitting on contact surfaces. After 30 to 50 cycles, contact resistance increases measurably from initial values under 1 milliohm to 5 or 10 milliohms. At 100 amps flowing through 10 milliohms of resistance, power dissipation equals 100 watts generating significant heat at the contacts. After 50 to 100 cycles, contacts may weld partially closed or fail to close reliably. Beyond 100 cycles, contactor replacement becomes necessary.
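The heating figure follows from P = I²R. A small sketch of that arithmetic, using the resistance values quoted above:

```python
def contact_heat_watts(current_a, contact_resistance_milliohm):
    """Power dissipated in the contact resistance: P = I^2 * R."""
    return current_a ** 2 * (contact_resistance_milliohm / 1000.0)


fresh_w = contact_heat_watts(100, 1)    # fresh contacts, about 1 milliohm
worn_w = contact_heat_watts(100, 10)    # worn contacts, 10 milliohms

print(f"fresh: {fresh_w:.0f} W, worn: {worn_w:.0f} W")
```

Because power scales with the square of current, the same worn contact dissipates only a quarter of that heat at 50 amps.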

System availability suffers in grid-tied installations. Battery disconnect causes the inverter to lose its DC source. Depending on inverter topology, some units stop all operation including PV conversion when battery voltage disappears. Solar production stops for 1 to 5 minutes during communication recovery and system restart. If EMI causes communication loss 2 to 3 times per week, that’s 100 to 150 events per year. Each event loses 10 to 30 minutes of potential solar harvest during recovery, totaling hours of lost production annually.

Mode 2: Continue with Last Known State

Both BMS and inverter make optimistic assumptions when communication fails. The BMS keeps contactors closed because local hardware protections still function: over-voltage comparators, under-voltage cutoffs, over-current sensing, and temperature monitoring operate independently of communication. These protections provide a safety net if conditions deteriorate. The inverter continues operating using the last CVL, CCL, and DCL values received, reasoning that conditions probably haven’t changed dramatically in the 10 or 20 seconds since the last message arrived.

This mode works well when communication loss is brief and operating conditions remain stable. An EMI glitch corrupts messages for 5 seconds during mid-charge at 50% SOC. Cell voltages, temperature, and appropriate limits haven’t changed meaningfully in that time. The inverter continues charging at the same current and voltage it was using before communication dropped. Communication resumes, new messages arrive confirming limits are unchanged, and the system never experienced any problem. Hundreds of these transient events can occur with zero impact on battery health or system performance.

Catastrophic failure happens when BMS state changes rapidly during sustained communication loss. Example: Pack temperature sits at 42 degrees Celsius when communication fails. The BMS had already reduced CCL to 50 amps for thermal protection. Over the next 4 minutes, temperature climbs to 46 degrees as charging continues.

The BMS calculates CCL should drop to 30 amps at 44 degrees, then 10 amps at 45 degrees, then zero amps at 46 degrees as emergency thermal protection activates. None of these updated limits reach the inverter. Charging continues at 50 amps based on the last known value from when temperature was 42 degrees. By the time temperature hits 48 degrees, the BMS is forced to open contactors as backup protection, triggering Mode 1 behavior and contactor damage.
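The gap between what the BMS wants and what the inverter delivers can be sketched as a simple step table. The thresholds below come from the example above; the 100 amp normal limit and the function name are assumptions for illustration, and real BMS derating curves vary by manufacturer.

```python
# (temperature threshold in Celsius, CCL in amps), checked from hottest down.
DERATING_STEPS = [(46.0, 0.0), (45.0, 10.0), (44.0, 30.0), (42.0, 50.0)]
STALE_CCL_A = 50.0  # last value the inverter received, at 42 degrees


def intended_ccl_a(temp_c, normal_ccl_a=100.0):
    """CCL the BMS would send at this temperature (normal_ccl_a is hypothetical)."""
    for threshold_c, limit_a in DERATING_STEPS:
        if temp_c >= threshold_c:
            return limit_a
    return normal_ccl_a


readings_c = [42, 43, 44, 45, 46]
# Amps delivered beyond what the BMS would have allowed at each temperature.
excess_a = [STALE_CCL_A - intended_ccl_a(t) for t in readings_c]

for temp_c, extra in zip(readings_c, excess_a):
    print(f"{temp_c} C: inverter charges {extra:.0f} A above the intended limit")
```

The excess grows as the pack heats, which is exactly when the extra current does the most harm.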

Another failure scenario involves cells approaching over-voltage during the top 5% of charge. Communication fails when the highest cell reads 3.52 volts. The BMS would normally drop CVL progressively: 56.0 volts to 55.5 volts to 55.0 volts to 54.5 volts as that cell approaches 3.60 volts. The inverter continues charging at 56.0 volts, the last known CVL. The cell reaches 3.60 volts or higher before charging naturally completes, accumulating over-voltage stress the BMS tried to prevent.

Mode 3: Hardcoded Default Values

The inverter cannot trust stale data indefinitely. After a timeout period typically ranging from 10 to 30 seconds, the inverter switches from using the last received BMS values to internal default settings pre-configured during installation or set at the factory. This allows the inverter to continue operating with non-communicating batteries like lead-acid or simple lithium packs without any BMS communication capability. The defaults represent supposedly safe values that work across a range of battery types.

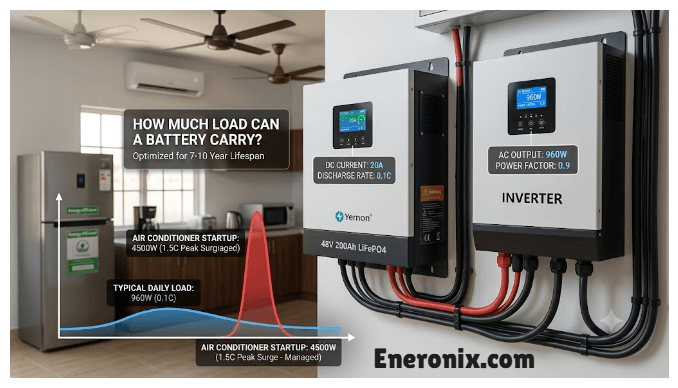

Default values rarely match what the BMS actually wanted. CVL defaults for generic lithium battery mode typically equal 57.6 volts, which corresponds to 3.60 volts per cell for a 16S pack. This comes from LFP cell datasheets listing 3.60 to 3.65 volts as maximum charge voltage. Most BMS implementations use 56.0 volts (3.50 volts per cell) for daily cycling to maximize longevity. The 1.6 volt difference, or 0.1 volts per cell, seems minor but matters significantly for calendar aging. Research shows that operating LFP cells at 3.60 volts versus 3.50 volts increases calendar aging rate by 30% to 50%.
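The per-cell figures are just pack voltage divided by the series cell count. A minimal sketch for a 16S pack:

```python
def per_cell_v(pack_v, cells_in_series=16):
    """Average cell voltage for a series pack, ignoring cell imbalance."""
    return pack_v / cells_in_series


default_cell_v = per_cell_v(57.6)   # generic lithium default CVL
bms_cell_v = per_cell_v(56.0)       # typical BMS daily-cycling CVL
pack_delta_v = round(57.6 - 56.0, 2)
cell_delta_v = round(default_cell_v - bms_cell_v, 3)

print(f"default: {default_cell_v:.2f} V/cell, BMS: {bms_cell_v:.2f} V/cell")
print(f"difference: {pack_delta_v} V per pack, {cell_delta_v} V per cell")
```

This is an average; in an imbalanced pack the highest cell sits above it, so the real overshoot on the weakest cell can be larger.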

The damage timeline is slow and invisible. Day one: communication fails at 2:30 AM during overnight charging. The inverter switches to default CVL of 57.6 volts and charges to that voltage instead of the BMS-intended 56.0 volts. Cells reach 3.60 volts instead of 3.50 volts. A single event causes minimal measurable damage. Week one: communication remains unfixed because the installer doesn’t know it failed and the customer doesn’t notice any problems. Seven charge cycles complete at 0.1 volts per cell higher than intended.

Month one: 30 charge cycles at elevated voltage. Capacity loss reaches 2% but remains below customer detection threshold. Month three: 90 cycles of daily overcharge produce 5% to 8% capacity loss. Customer notices slightly reduced runtime but attributes it to normal aging. Month six: 180 charge cycles at wrong voltage cause 10% to 15% capacity loss. Customer complains about premature degradation.

Root cause discovery requires checking historical logs. BMS logs show communication fault occurred 6 months ago. Inverter logs show switch to default parameters at the same timestamp. The inverter display probably indicated battery status as normal the entire time because from the inverter perspective, charging completed successfully to its configured voltage. Damage already occurred and cannot be reversed.

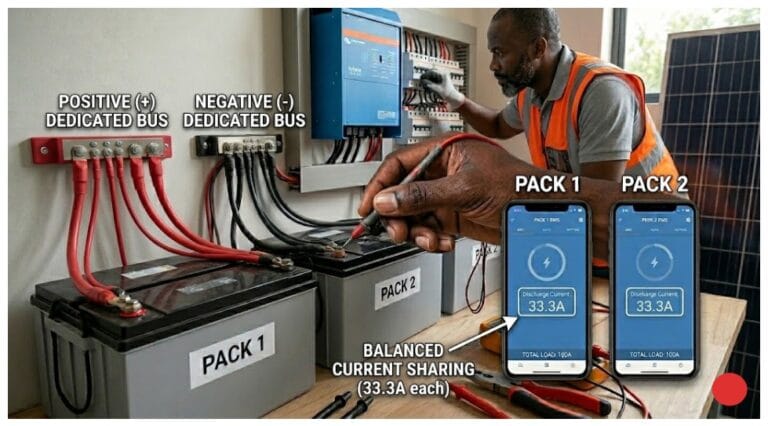

Proper Watchdog Implementation

Coordinated timeout values prevent asymmetric behavior where one device thinks communication is healthy while the other declares it failed. Both BMS and inverter must use the same timeout threshold, typically 10 seconds representing ten times the nominal 1 second message interval. The timeout tolerance should account for realistic timing variation: plus or minus 10% allows messages anywhere from 900 to 1100 milliseconds to be considered valid. If the BMS uses a 5 second timeout but the inverter uses 15 seconds, the BMS might open contactors while the inverter still thinks everything is fine.
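A watchdog of this kind can be sketched in a few lines. The class below is illustrative, not any vendor's firmware; timestamps are passed in explicitly so the behavior is easy to test, and in a real system they would come from a monotonic clock.

```python
class CommWatchdog:
    """Declares communication lost when no valid message arrives in time."""

    def __init__(self, timeout_s=10.0):
        self.timeout_s = timeout_s
        self.last_rx_s = None

    def message_received(self, now_s):
        """Record the arrival time of a valid message."""
        self.last_rx_s = now_s

    def is_timed_out(self, now_s):
        # Design choice: before the first message, treat the link as down.
        if self.last_rx_s is None:
            return True
        return (now_s - self.last_rx_s) > self.timeout_s


# Mismatched timeouts from the example: BMS at 5 s, inverter at 15 s.
bms = CommWatchdog(timeout_s=5.0)
inverter = CommWatchdog(timeout_s=15.0)
bms.message_received(0.0)
inverter.message_received(0.0)

# Eight seconds of silence: the two devices now disagree about link state.
bms_lost = bms.is_timed_out(8.0)
inverter_lost = inverter.is_timed_out(8.0)
print(f"BMS sees loss: {bms_lost}, inverter sees loss: {inverter_lost}")
```

With both timeouts set to the same value, the two devices change state within one message interval of each other instead of disagreeing for seconds.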

Conservative fallback behavior keeps the system operational without causing damage. When the BMS detects communication loss, instead of opening contactors immediately, it drops to conservative limits that are safe regardless of actual cell state. CVL reduces to 54.4 volts, below normal operating voltage but safe for all conditions. CCL and DCL both reduce to 25 amps, approximately 0.1 to 0.15C for typical battery sizes, providing adequate thermal margin. The BMS keeps contactors closed and continues transmitting these conservative limits even though it receives no acknowledgment. When communication resumes, the BMS returns to normal dynamic limit calculation.

The inverter implements matching conservative behavior. Upon detecting communication timeout, instead of switching to hardcoded defaults, it restricts operation to safe minimums. Charge current limited to 25 amps maximum regardless of inverter rating. Discharge current similarly limited. Voltage control uses the last known CVL or 54.4 volts, whichever is lower, preventing overcharge even if the last message was received during high-voltage conditions. Critically, the inverter flags a warning to the user indicating battery communication has degraded, prompting investigation rather than silently continuing with wrong parameters.
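The inverter-side rule can be sketched as a pure function. The 54.4 volt and 25 amp values come from the text above; the `Limits` type and function name are hypothetical, and taking the lower of the last known current limit and 25 amps is one reasonable reading of "25 amps maximum".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    cvl_v: float  # charge voltage limit
    ccl_a: float  # charge current limit
    dcl_a: float  # discharge current limit


FALLBACK = Limits(cvl_v=54.4, ccl_a=25.0, dcl_a=25.0)


def inverter_limits(last_known, comm_ok):
    """Return (limits to apply, warn_user) for the current link state."""
    if comm_ok:
        return last_known, False
    # On timeout, never exceed the fallback, and never raise a limit that
    # the BMS had already set lower before the link dropped.
    limited = Limits(
        cvl_v=min(last_known.cvl_v, FALLBACK.cvl_v),
        ccl_a=min(last_known.ccl_a, FALLBACK.ccl_a),
        dcl_a=min(last_known.dcl_a, FALLBACK.dcl_a),
    )
    return limited, True


last = Limits(cvl_v=56.0, ccl_a=100.0, dcl_a=120.0)
applied, warn_user = inverter_limits(last, comm_ok=False)
print(applied, "warning:", warn_user)
```

Returning the warning flag alongside the limits keeps the user notification tied to the fallback itself, so one cannot be triggered without the other.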

This coordinated approach keeps the system available while limiting damage during sustained communication loss. The battery charges and discharges at reduced power but continues serving loads. Conservative limits prevent thermal stress, over-voltage, and over-discharge. User notification ensures the problem gets investigated and fixed rather than ignored. This level of sophistication appears primarily in high-end commercial systems and co-developed products where the same manufacturer controls both BMS and inverter firmware. Residential systems rarely implement proper watchdog coordination due to development cost and market price sensitivity.

Diagnosing Communication Loss After the Fact

Mode 1 systems announce their problems loudly through customer complaints about random shutdowns and offline events. Physical inspection reveals contactor damage if accessible: contacts show visible pitting under magnification, contact resistance measures above 5 milliohms when fresh contacts read under 1 milliohm. BMS logs contain entries showing communication timeout followed immediately by contactor opening commands. Inverter logs record battery disconnect or DC voltage loss events. Frequency matters: weekly occurrences indicate a persistent problem requiring immediate physical layer repair.

Mode 2 systems present subtler symptoms. Customers report that the battery shut down with state of charge remaining, or that charging behavior seems erratic. Many Mode 2 systems generate no complaints at all if communication loss events are brief and conditions remain stable during the outage. Log analysis becomes critical: BMS logs show communication timeout events but contactors remain closed throughout. The BMS may record protection events like over-voltage or over-temperature that the inverter never anticipated because it was operating with stale limits. Damage patterns show occasional over-voltage or thermal stress events rather than chronic problems, with capacity loss of 5% to 10% over 6 months.

Mode 3 diagnosis requires connecting premature capacity fade back to historical communication failures months earlier. Customer complaints focus on faster than expected degradation, typically 10% to 15% capacity loss in 6 months when LFP should lose under 2%. Acute failures are absent. The battery just ages prematurely. Diagnostic measurements at full charge reveal the problem: cell voltages consistently sit at 3.58 to 3.62 volts when properly configured systems should terminate at 3.48 to 3.52 volts. This 0.1 volt elevation indicates charging to default CVL instead of BMS-specified values.
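The voltage check can be scripted against a series of full-charge readings. This is a sketch using the two voltage windows quoted above; the function name and the rule that every reading must fall in a window are assumptions for illustration.

```python
DEFAULT_CVL_WINDOW_V = (3.58, 3.62)  # charging to the generic default
BMS_CVL_WINDOW_V = (3.48, 3.52)      # charging to the BMS-specified value


def classify_full_charge(max_cell_readings_v):
    """Classify a list of highest-cell voltages taken at charge termination."""
    if not max_cell_readings_v:
        return "no_data"

    def all_within(window):
        low, high = window
        return all(low <= v <= high for v in max_cell_readings_v)

    if all_within(DEFAULT_CVL_WINDOW_V):
        return "default_cvl_suspected"
    if all_within(BMS_CVL_WINDOW_V):
        return "bms_cvl"
    return "inconclusive"


week_of_readings_v = [3.60, 3.59, 3.61, 3.60, 3.60, 3.58, 3.61]
verdict = classify_full_charge(week_of_readings_v)
print(verdict)
```

An inconclusive result, with readings split between the two windows, may itself be informative: it can indicate communication that comes and goes rather than a single permanent failure.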

Log correlation provides definitive diagnosis. Search BMS logs for communication fault entries. Find the timestamp when communication first failed. Check whether it was ever restored. Search inverter logs for messages indicating switch to default battery parameters or similar language. Compare the communication fault timestamp to when capacity fade accelerated. Customers often notice reduced runtime starting 2 to 3 months after the communication fault occurred, giving accumulated damage time to become visible. The challenge is that symptoms appear months after cause, requiring detailed log review to connect communication loss in March to capacity complaints in September.
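The search for the first unrestored fault can be sketched as follows. The event names and timestamps are invented for illustration; real BMS and inverter logs use vendor-specific formats that need parsing first.

```python
from datetime import datetime


def first_unrestored_fault(events):
    """Return the start time of the fault that was never restored, or None.

    events: list of (timestamp, kind) sorted by time, where kind is
    "comm_fault" or "comm_restored" (hypothetical event names).
    """
    fault_at = None
    for timestamp, kind in events:
        if kind == "comm_fault" and fault_at is None:
            fault_at = timestamp
        elif kind == "comm_restored":
            fault_at = None
    return fault_at


# Made-up log: one transient fault that recovered, then one that did not.
events = [
    (datetime(2024, 3, 3, 14, 0), "comm_fault"),
    (datetime(2024, 3, 3, 14, 1), "comm_restored"),
    (datetime(2024, 3, 10, 2, 30), "comm_fault"),
]
complaint_at = datetime(2024, 9, 12, 9, 0)

fault_at = first_unrestored_fault(events)
days_undetected = (complaint_at - fault_at).days
print(f"fault began {fault_at}, complaint came {days_undetected} days later")
```

Comparing that start time against the inverter's switch to default parameters confirms whether the two logs describe the same event.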

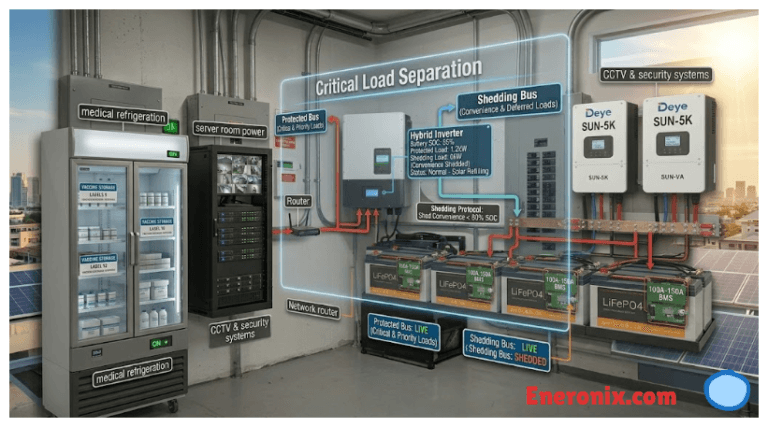

Prevention and Mitigation Strategies

Choose the appropriate failure mode based on application requirements and risk tolerance. Use Mode 1 disconnect behavior when battery replacement cost significantly exceeds downtime cost, such as high-value battery banks in applications with graceful shutdown capability and regular maintenance access for contactor replacement. Cell protection becomes paramount in medical equipment backup or safety-critical systems where battery damage creates unacceptable risk even if availability suffers.

Use Mode 2 continue behavior when brief transient communication loss is expected due to high EMI environments and the BMS implements robust local hardware protection that functions independently of communication. This mode suits applications where availability matters more than perfect cell protection and actual communication loss remains rare, occurring less than 1% of operating time. The key requirement is that local BMS protections like hardware over-voltage comparators and temperature cutoffs can handle worst-case scenarios without inverter coordination.

Configure Mode 3 defaults carefully when this mode cannot be avoided because the inverter offers no other options. Set defaults conservatively to match BMS normal operating parameters rather than using generic values. CVL should equal the BMS typical operating voltage like 56.0 volts instead of the 57.6 volt datasheet maximum. CCL and DCL should be set to 0.3C or lower, providing conservative thermal margin. Verify during commissioning that defaults actually match BMS requirements by temporarily disconnecting communication and observing inverter behavior.
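Deriving the defaults from BMS parameters can be sketched like this. The 200 Ah capacity is a hypothetical pack size, and the function names and the 0.1 volt commissioning tolerance are assumptions for illustration.

```python
def mode3_defaults(bms_operating_cvl_v, capacity_ah, c_rate=0.3):
    """Inverter defaults that mirror BMS operating values, not datasheet maxima."""
    current_a = capacity_ah * c_rate
    return {"cvl_v": bms_operating_cvl_v, "ccl_a": current_a, "dcl_a": current_a}


def default_cvl_matches(observed_cvl_v, bms_operating_cvl_v, tolerance_v=0.1):
    """Commissioning check: voltage seen with comms unplugged vs BMS target."""
    return abs(observed_cvl_v - bms_operating_cvl_v) <= tolerance_v


defaults = mode3_defaults(bms_operating_cvl_v=56.0, capacity_ah=200)
generic_ok = default_cvl_matches(57.6, 56.0)     # generic default left in place
configured_ok = default_cvl_matches(56.0, 56.0)  # default set to the BMS value
print(defaults, generic_ok, configured_ok)
```

Running the check with the communication cable unplugged during commissioning catches a generic default before it has a chance to be used in the field.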

Monitor communication uptime percentage by tracking successful messages received versus expected messages over time. Calculate as successful message count divided by expected message count times 100. Target uptime above 99% as the baseline for healthy systems. Set alert thresholds at 95% uptime to trigger immediate investigation before damage accumulates. Check logs daily for any communication fault events rather than waiting for customer complaints weeks or months later.
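The uptime calculation and thresholds translate directly into code. A minimal sketch, assuming one message per second so that a day has 86,400 expected messages; the status labels are illustrative.

```python
def uptime_percent(received, expected):
    """Successful messages divided by expected messages, times 100."""
    if expected <= 0:
        raise ValueError("expected message count must be positive")
    return 100.0 * received / expected


def uptime_status(pct, target_pct=99.0, alert_pct=95.0):
    """Map an uptime percentage onto the thresholds described above."""
    if pct >= target_pct:
        return "healthy"
    if pct >= alert_pct:
        return "investigate"
    return "alert"


EXPECTED_PER_DAY = 24 * 60 * 60  # one message per second (assumed rate)

pct = uptime_percent(received=79_488, expected=EXPECTED_PER_DAY)
status = uptime_status(pct)
print(f"{pct:.1f}% uptime: {status}")
```

Computing the figure per day rather than per month keeps a single bad week from being averaged away.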

Fix root causes immediately when uptime falls below 99%. Physical layer problems like loose terminals, damaged cables, or missing termination cause most failures and are straightforward to repair. Re-torque screw terminals that thermal cycling has loosened. Replace cables showing physical damage. Add or repair termination resistors so the bus measures the proper 60 ohms between CAN high and CAN low with power off (two 120 ohm terminators in parallel). Reroute cables away from EMI sources when errors correlate with high power operation. Firmware issues are harder to address but manufacturer updates sometimes improve timeout tolerance or fix timing bugs.

The “Mostly Working” Problem

A system operating at 95% communication uptime sounds acceptable at first glance. Five percent loss equals 1.2 hours per day, 36 hours per month, or about 438 hours per year of lost communication. If Mode 3 activates during these loss periods and the default CVL is 1.6 volts too high (57.6 volts instead of the BMS-intended 56.0 volts for 16S), the battery experiences roughly 438 hours annually of overcharge stress at 0.1 volts per cell above specification. Research on LFP calendar aging shows that voltage elevation of this magnitude significantly accelerates degradation.
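Converting an uptime percentage into hours of lost communication is simple arithmetic. The sketch below uses a 365-day year, which gives 438 hours at 95% uptime; counting twelve 30-day months gives 432 instead.

```python
def downtime_hours(uptime_pct, period_hours):
    """Hours without communication over a period at a given uptime."""
    return (100.0 - uptime_pct) / 100.0 * period_hours


HOURS_PER_DAY = 24
HOURS_PER_YEAR = 24 * 365

daily_h = {pct: round(downtime_hours(pct, HOURS_PER_DAY), 2) for pct in (99.9, 95, 92, 85)}
yearly_h_at_95 = round(downtime_hours(95, HOURS_PER_YEAR), 1)

for pct, hours in daily_h.items():
    print(f"{pct}% uptime: {hours} h/day without communication")
print(f"95% uptime: {yearly_h_at_95} h/year")
```

Seen in hours rather than percent, even the 99.9% systems lose about a minute and a half of communication per day, which is why the response mode matters at every uptime level.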

Capacity impact becomes visible over months. Normal LFP aging under proper conditions produces 1% to 2% capacity loss over 6 months for moderate cycling applications. The same battery with 5% communication loss and incorrect defaults shows 8% to 12% capacity fade in 6 months. The difference of 6% to 10% additional fade is directly attributable to operating with wrong parameters during communication loss periods. The customer sees total degradation but cannot distinguish normal aging from communication-induced damage without detailed analysis.

Installers deprioritize the problem because the system appears functional from a user perspective. Charging completes, discharging powers loads, the inverter display shows normal operation 95% of the time. Customer complaints haven’t started yet because capacity loss remains below the threshold where runtime reduction becomes obvious. Other installations need attention, new jobs require commissioning, and a system working 95% of the time doesn’t trigger urgency. The communication issue gets noted but not immediately resolved.

Reality contradicts the assessment that 95% is good enough. Five percent communication loss causes measurable degradation that accumulates silently over months. Damage becomes permanent and irreversible. By the time capacity fade reaches 10% and customers start complaining 6 to 12 months after installation, the opportunity to prevent damage has long passed. The only remedy is battery replacement under warranty if the manufacturer accepts the claim, or customer dissatisfaction and reputation damage if they don’t.

The standard should be 99% uptime minimum with immediate investigation and resolution of any sustained performance below this threshold. Ninety-five percent isn’t close enough. The difference between 99% reliable and 95% mostly working is the difference between 10 year battery life and 6 year battery life.

Conclusion

Three failure modes create three distinct damage patterns. Mode 1 disconnect behavior causes obvious immediate problems through system unavailability and progressive contactor degradation from arcing under load. Capacity loss remains minimal because cells are protected, but operational reliability suffers and mechanical components wear out from repeated cycling. Mode 2 continue behavior produces subtle damage that depends entirely on whether operating conditions change during communication loss. Stable conditions cause no harm while rapidly changing temperature or voltage creates accumulated stress from operating with inappropriate stale limits.

Mode 3 hardcoded defaults represent the most dangerous failure mode because damage accumulates invisibly over months. The system appears to function normally with successful charge and discharge cycles while chronically operating outside BMS specifications. Daily overcharge by 0.1 volts per cell seems minor but compounds into significant capacity fade over hundreds of cycles. The damage pattern mimics premature aging, making diagnosis difficult without detailed log analysis correlating communication faults to degradation timelines.

Diagnostic approach requires checking historical logs for communication fault events regardless of whether current symptoms are obvious. Calculate communication uptime percentage over weeks and months to identify marginal reliability that causes cumulative damage. Measure actual CVL during charging by monitoring pack voltage at charge termination and comparing to BMS specifications. Values consistently 1 to 2 volts above BMS settings indicate Mode 3 operation with wrong defaults. Correlate capacity loss acceleration to timestamps of communication failures months earlier.

Prevention focuses on configuration and monitoring rather than hoping communication never fails. Set conservative defaults that match BMS requirements instead of using generic values. Monitor communication uptime actively with 99% as minimum target. Investigate and fix root causes immediately when uptime drops below 99% instead of accepting mostly working as good enough. Choose the appropriate failure mode for your specific application balancing cell protection against availability requirements.

The fundamental insight is that communication loss exists on a spectrum rather than being binary. Systems with 85% to 95% uptime appear functional but degrade batteries significantly faster than systems maintaining 99% uptime. For complete context on communication reliability including physical layer troubleshooting and protocol implementation, see the main guide on inverter battery communication protocols.

I am Engr. Ubokobong Ekpenyong, a solar specialist and lithium battery systems engineer with over five years of hands-on experience designing, assembling, and commissioning off-grid solar and energy storage systems. My work focuses on lithium battery pack architecture, BMS configuration, and system reliability in off-grid and high-demand environments.